Overview of strategy

- Only stake on games from round 5 onwards (because of the model's historic performance in early rounds and the specific features that drive the model's precision)

- Only stake on games in which the model's perceived probability is greater than the bookmaker's implied probability, and on the condition that the perceived probability is greater than the average of the model's false positive perceived probability (FPpp)

- Stake using a single bookmaker (in this case Sportsbet)

- Use a fixed stake of 5% of the current bank

- Do not stake using any exotic bet types (such as accumulators or multi bets); stake only on simple head to head bets offered by the bookmaker. (N.B. Sportsbet only offers head to head bets without draws, i.e. you cannot bet on the outcome of an NRL match being a draw.)
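The rules above can be sketched as a single decision function. This is only an illustration under stated assumptions, not the code used for the season: the function name `recommended_stake`, its parameters and the example numbers are all hypothetical, and the implied probability is assumed to be 1 / decimal odds.

```python
def recommended_stake(round_number, perceived_prob, decimal_odds,
                      mean_fp_perceived_prob, bank,
                      first_round=5, stake_fraction=0.05):
    """Return the stake for one head to head bet, or 0.0 if no bet is placed.

    All names and defaults are illustrative assumptions based on the overview.
    """
    # Assumption: implied probability is the reciprocal of the decimal odds.
    implied_prob = 1.0 / decimal_odds

    if round_number < first_round:
        return 0.0  # skip the early rounds
    if perceived_prob <= implied_prob:
        return 0.0  # the model must rate the team above the bookmaker
    if perceived_prob <= mean_fp_perceived_prob:
        return 0.0  # must also exceed the average FPpp
    return stake_fraction * bank  # 5% of the current bank by default


# Hypothetical example values, not taken from real matches.
stake = recommended_stake(round_number=6, perceived_prob=0.70,
                          decimal_odds=1.67, mean_fp_perceived_prob=0.62,
                          bank=1000.0)
print(stake)
```

Because the stake is a fraction of the current bank, the `bank` argument would need to be updated after every settled bet.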

The odds are NOT in your favour

Introduction

The following outlines the staking strategy I will be using for the 2016 season. The strategy is specifically tuned to the performance of the chosen predictive model, and the detail and reasoning behind it are presented below.

This is the sad reality:

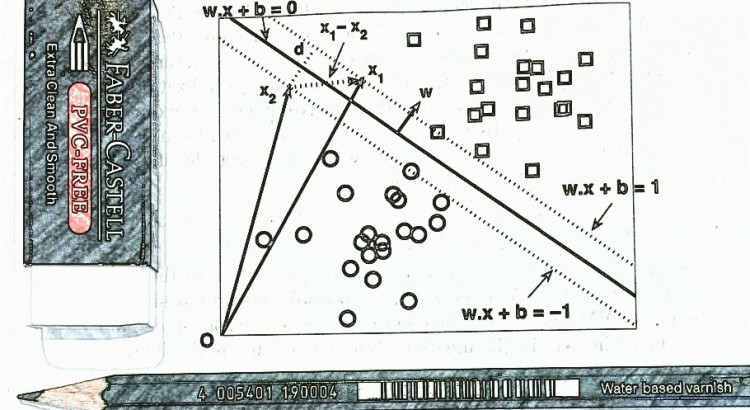

You have come up with a model (mathematical, clairvoyant or other) which can predict the outcome of matches over a season with >50% accuracy. Great! However, this does NOT mean you will be able to achieve a profit. In fact, it is most probable that you will lose money even if your predictive power is >50%. Although the following might come off as a little heavy on the technical speak, all I am trying to say is that in order to make a profit, you need a model which can predict winners with greater precision than the implied odds, or a model which can optimise staking on true positive results and mitigate staking on false positive results. This is much more difficult than simply predicting who will win a match. It also identifies one of the issues with perceived probabilities generated by machine learning algorithms, which is that they generally don’t reflect the true [*] probability of an event occurring (which is BAD if we are using these probabilities for staking).

[*] Of course no one knows the true probability of an event occurring; however, some probabilities more closely reflect ‘reality’ than others.

As discussed earlier, evaluation/performance measures of a two class classification model are calculated or derived from the model's true positive, true negative, false positive and false negative results. While all of these measures are important in determining the predictive power of a model, some are of greater importance to us when evaluating whether a model can generate long term profit. Specifically, when we back a team to win in a head to head match and lose, it hurts (our dignity and our wallet)! In our predictive model evaluation, these instances are called ‘false positives’ (basically when the model tells us to back a team but the actual result is a loss). So it’s really important for our model to have as few false positives as possible (and of course as many true positives as possible).

Without getting too technical, recall that the evaluation measure precision (sometimes called positive predictive value) is derived by dividing the true positives by the sum of true positives and false positives: TP / (TP + FP). Because higher precision means fewer false positives, this measure becomes quite predictive of how the model will perform in making a profit. In addition, because we are dealing with head to head staking (where we can only stake on the team to win), we are exclusively dealing with positive predictions (positive class = win). This means that model and staking evaluation is done primarily using true positives and false positives, because there will be no true negative (and hence no false negative) results. The following outlines some simple measures one can use to evaluate whether a predictive model has the potential to make a profit.
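As a quick illustration of the precision calculation described above, here is a minimal sketch. The function name and the example counts are assumptions chosen for illustration only.

```python
def precision(true_positives, false_positives):
    """Precision = TP / (TP + FP), computed over the bets actually staked."""
    total = true_positives + false_positives
    if total == 0:
        raise ValueError("precision is undefined with no positive predictions")
    return true_positives / total


# Hypothetical counts: 6 winning picks and 4 losing picks.
print(precision(6, 4))
```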

Evaluating the model's potential for profit

These are the facts (keeping it very simple):

- For a head to head match where there are no lay bets (i.e. you can only back the winner):

- If a bookmaker offered evens on every match (backing a winner at odds = 2.0, implied probability = 0.5) and your model had a precision of 50% across all stakes made, then you would break even (profit/loss = $0)

- You can only make a long term profit using a fixed staking strategy if your model's precision is greater than the implied probability offered by the bookmaker over the matches on which you have staked (i.e. where the model has predicted the positive class and you have staked on its recommendation). This measure can be termed the model's potential for profitability (MPP): the further the model's precision is above the implied probability, the greater the MPP.

- If precision is less than the implied probability offered by the bookmaker in the same scenario, it may still be possible to make a profit using proportional staking strategies, but only if the model's conditional potential for profitability (MCPP) is positive. If the MCPP is positive, the model's perceived probabilities are said to be well calibrated. If a model’s MCPP is negative, then it may be possible to (re)calibrate the perceived probabilities using regression or classification techniques.

- If both MPP and MCPP are negative and the model's perceived probabilities cannot be calibrated so that MCPP is positive, then the model cannot make a profit

- Therefore, in addition to having a model with high precision, you must calculate and understand the MPP and MCPP of the predictive model using historic odds information. A model with high precision but negative MCPP will lose money using a proportional staking strategy.

So if MPP and MCPP are so important, what the heck are they? Well, they are simple measures to quickly evaluate your model and determine whether you can actually make money or need to go back to the drawing board.

The measure of the model's potential for profitability (MPP) can be calculated by:

Predictive model's average precision – average implied probability

Expressed as

MPP = mp – P(A)

where

mp = the average precision of a predictive model

P(A) = the average of the implied probability

If MPP is positive then you have the potential to make a profit from fixed staking. The higher this measure, the greater the potential for profit (using any staking strategy). If MPP is negative then you cannot use a fixed staking strategy and expect to make a long term profit.

If MPP is negative, it may still be possible to make a profit IF the model's conditional potential for profitability (MCPP) is positive.
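A minimal sketch of the MPP calculation follows. The function names and the sample odds are assumptions, and the implied probability is again assumed to be 1 / decimal odds, averaged over the matches that were staked on.

```python
def implied_probability(decimal_odds):
    """Assumption: implied probability is the reciprocal of the decimal odds."""
    return 1.0 / decimal_odds


def mpp(model_precision, staked_odds):
    """MPP = mp - P(A): average precision minus average implied probability."""
    implied = [implied_probability(odds) for odds in staked_odds]
    average_implied = sum(implied) / len(implied)
    return model_precision - average_implied


# Hypothetical odds for four staked matches and a model precision of 70%.
example_odds = [1.67, 1.67, 1.67, 1.67]
print(round(mpp(0.70, example_odds), 3))
```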

The conditional potential for profitability (MCPP) can be calculated by:

(Perceived probability of true positives – Implied probability of true positives) + (Implied probability of false positives – perceived probability of false positives)

Expressed as:

MCPP = (P(ATP) – P(BTP)) + (P(BFP) – P(AFP))

Where:

P(ATP) = the average perceived probability of true positive events

P(AFP) = the average perceived probability of false positive events

P(BTP) = the average implied probability of true positive events

P(BFP) = the average implied probability of false positive events

If MCPP is positive, we can say that the perceived probabilities are well calibrated against the implied probabilities and there is potential to make a profit using a proportional staking strategy even if MPP is negative. If both MPP and MCPP are negative, then the predictive model cannot be profitable. When evaluating MPP and MCPP results we can infer:

MPP positive -> Potential for profit using fixed staking or proportional staking

MPP negative and MCPP positive -> Potential for profit using proportional staking

MPP positive and MCPP negative -> Potential for profit using fixed staking or proportional staking (because precision of the model will be higher than the average perceived probability)

MPP and MCPP negative -> No potential for profit
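The MCPP formula and the interpretation rules above can be sketched as follows. This is an illustration only: the tuple layout, the function names and the sample bets are assumptions, and a value of exactly zero is treated here as "not positive".

```python
from statistics import mean


def mcpp(bets):
    """MCPP = (P(ATP) - P(BTP)) + (P(BFP) - P(AFP)).

    bets: list of (perceived_prob, implied_prob, won) tuples for staked matches.
    """
    true_pos = [(p, q) for p, q, won in bets if won]
    false_pos = [(p, q) for p, q, won in bets if not won]
    if not true_pos or not false_pos:
        raise ValueError("need at least one true positive and one false positive")
    a_tp = mean(p for p, _ in true_pos)   # average perceived probability, TP
    b_tp = mean(q for _, q in true_pos)   # average implied probability, TP
    a_fp = mean(p for p, _ in false_pos)  # average perceived probability, FP
    b_fp = mean(q for _, q in false_pos)  # average implied probability, FP
    return (a_tp - b_tp) + (b_fp - a_fp)


def interpret(mpp_value, mcpp_value):
    """Map MPP and MCPP onto the outcomes listed above."""
    if mpp_value > 0:
        return "potential for profit using fixed or proportional staking"
    if mcpp_value > 0:
        return "potential for profit using proportional staking"
    return "no potential for profit"


# Hypothetical staked bets: (perceived probability, implied probability, won?)
sample_bets = [(0.70, 0.60, True), (0.65, 0.62, True),
               (0.55, 0.60, False), (0.58, 0.61, False)]
print(round(mcpp(sample_bets), 3))
print(interpret(-0.02, mcpp(sample_bets)))
```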

Let's have a look at how this works in practice.

MPP example

If the average of the bookmaker odds over the term of your investment is 1.67 (i.e. an implied probability of 60%), then you need a model precision of 60% to break even. In other words, an MPP of 0 means you break even.

Proof:

Given:

- 10 matches

- fixed stake (fs) of $10 on each match

- odds ~1.67 (equivalent to implied probability of 60%)

- 60% precision: 6 correct picks (true positives, TP) and 4 incorrect picks (false positives, FP)

Profit under the given scenario

= profit gained from correct picks (true positives) – loss from incorrect picks (false positives)

= ((odds * fs * TP) – (fs * TP)) – (fs * FP)

= ((1.67 * 10 * 6) – (10 * 6)) – (10 * 4)

≈ (100 – 60) – 40

= 40 – 40

= 0

Yup looks like it holds true.

If under the same conditions your model precision was 70%, MPP would be 0.7 – 0.6 = 0.1. Since this is positive, we expect to make a profit. Let's check:

profit = profit gained from correct picks (true positives) – loss from incorrect picks (false positives)

= ((odds * fs * TP) – (fs * TP)) – (fs * FP)

= ((1.67 * 10 * 7) – (10 * 7)) – (10 * 3)

≈ 16.7 (using the unrounded odds of 1/0.6; the rounded 1.67 gives 16.9)
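The two worked examples can be checked with a short script. This is a sketch under the same assumptions as the proof (fixed stake, identical odds on every match); the function name is hypothetical, and the unrounded odds of 1/0.6 are used so that the break-even case comes out at zero apart from floating point noise.

```python
def fixed_stake_profit(decimal_odds, fixed_stake, true_positives, false_positives):
    """Profit = ((odds * fs * TP) - (fs * TP)) - (fs * FP)."""
    winnings = (decimal_odds * fixed_stake * true_positives) - (fixed_stake * true_positives)
    losses = fixed_stake * false_positives
    return winnings - losses


fair_odds = 1 / 0.6  # decimal odds for an implied probability of 60%

# 60% precision over 10 matches: the break-even case.
print(round(fixed_stake_profit(fair_odds, 10, 6, 4), 2))

# 70% precision over 10 matches: the profitable case.
print(round(fixed_stake_profit(fair_odds, 10, 7, 3), 2))
```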

Great, so all we need to do to quickly check whether our model has the potential to make a profit is to calculate MPP. But what if MPP is negative?

Well, that’s when we need to look at MCPP. This measure basically compares our true positive and false positive perceived probabilities against the implied probabilities. The idea is to determine whether we can overcome lower model precision and still make a profit by optimising staking on true positives and mitigating staking on false positives.

Stay tuned for more detail on the staking strategy